As I’ve been thinking about quilt ideas related to security and privacy during my staybatical at the STUDIO for Creative Inquiry all year, the title for this quilt was obvious: Security Blanket. Less obvious was the design of a quilt that would fit this title. Ultimately, I took inspiration from the research on the security and usability of text passwords that I’ve been working on with my students and colleagues. While this quilt started out as an art project inspired by my research, what I learned from creating it will likely influence my future password research.

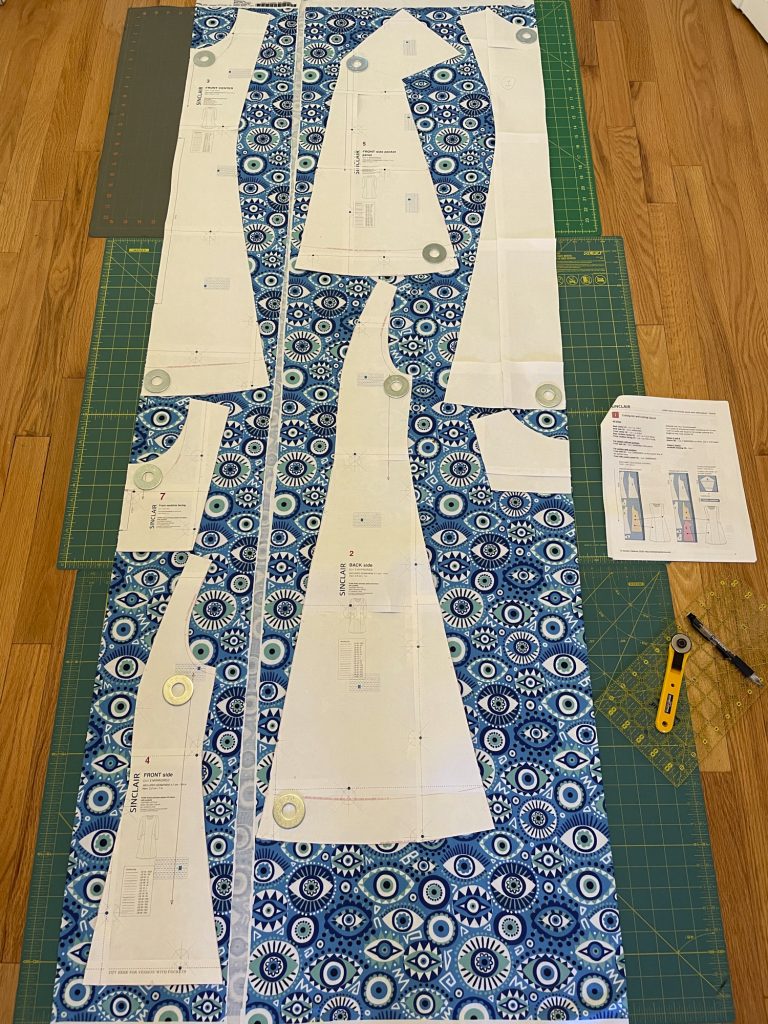

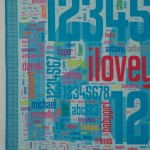

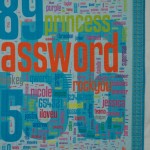

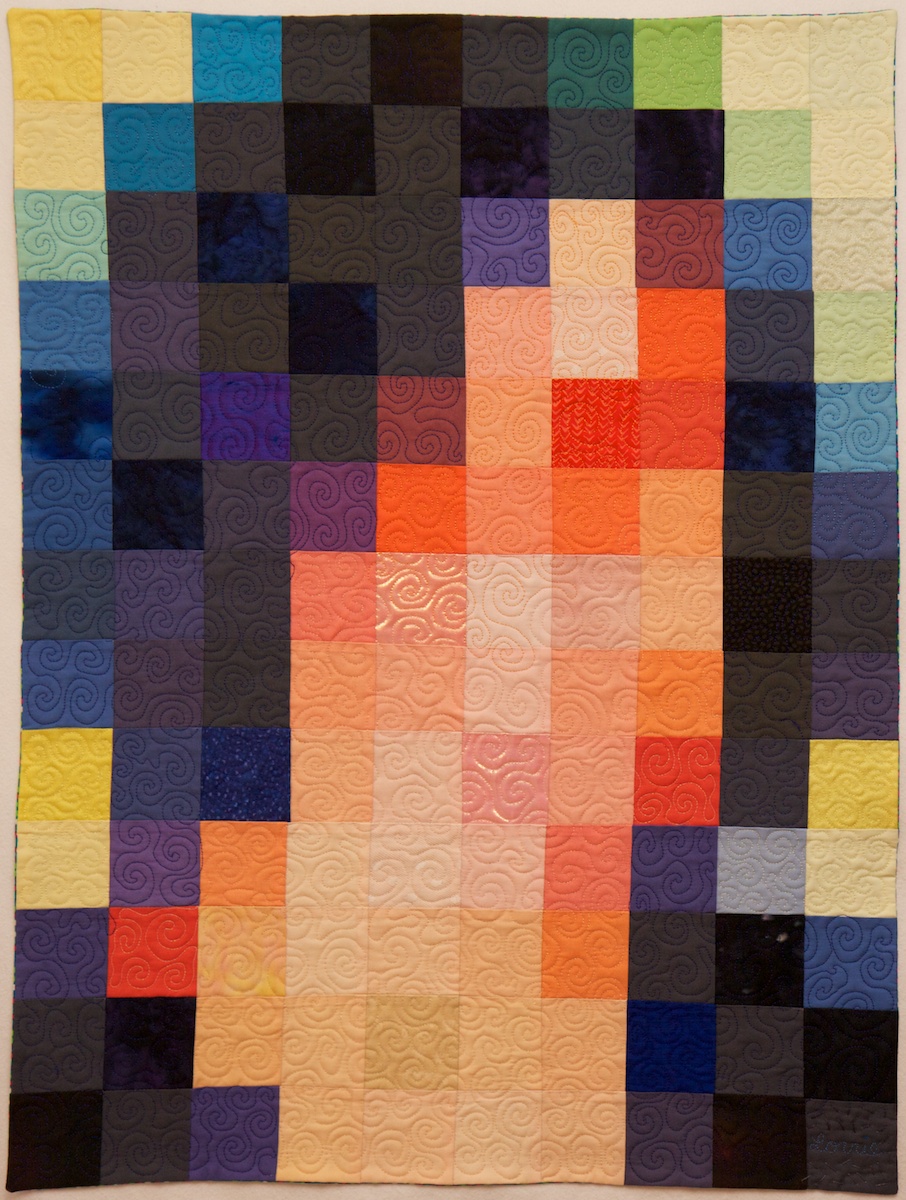

Security Blanket, machine quilted, digitally printed cotton fabric, 63.5″x39″

Our research group has collected tens of thousands of passwords created under controlled conditions as part of our research. Among other things, we have compared these passwords with the archives of stolen passwords that have been made public over the past few years. Perhaps the largest such archive consists of 32 million passwords stolen from social gaming website RockYou and made public in December 2009. These passwords are notably weak, having been created without the requirement to include digits or symbols or even avoid dictionary words. Security firm Imperva published an analysis of these passwords. More recent analyses of stolen passwords have found that passwords stolen in 2012 are pretty similar to those stolen in 2009.

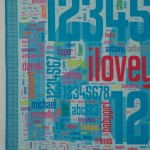

The media had fun publishing the most common passwords from the RockYou breach. As with other breaches, password and 123456 figured prominently. But after you get past the obvious lazy choices, I find it fascinating to see what else people choose as passwords. These stolen passwords, personal secrets, offer glimpses into the collective consciousness of Internet users.

I asked my students to extract the 1000 most popular passwords from the RockYou data set and give me a list with frequency counts. I then went through the list and sorted the passwords into a number of thematic groups. I assigned a color to each group and entered the passwords with weights and colors into the Wordle online word cloud generator. I then saved the output as a PDF and edited it in Adobe Illustrator to rearrange the words in a shape that I liked, with some pairs of words purposefully placed in close proximity. I designed a border, and had the whole thing printed on one large sheet of fabric by Spoonflower. When the fabric arrived, I layered it with batting and quilted it. I bound it with matching fabric from Spoonflower that I designed.
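For readers curious about the extraction step, here is a minimal sketch of how a top-N list with frequency counts could be produced. It is not the script my students used; the function name `top_passwords` and the tiny sample list are made up for illustration, and it assumes the leaked data is available as one password per line.

```python
from collections import Counter

def top_passwords(lines, n=1000):
    """Return the n most common passwords as (password, count) pairs."""
    # Strip only the line ending so that passwords are kept exactly as typed,
    # including any leading zeros or spaces.
    counts = Counter(line.rstrip("\r\n") for line in lines if line.strip())
    return counts.most_common(n)

# Hypothetical sample standing in for the real data set.
sample = ["123456\n", "password\n", "123456\n", "monkey\n", "123456\n", "password\n"]
top = top_passwords(sample, n=2)
```

Passwords are treated as text throughout, never as numbers, which matters for entries made up entirely of digits.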

Sorting 1000 passwords into thematic categories took a while. While a number of themes quickly emerged, many passwords could plausibly fall into multiple categories. I tried to put myself in the mindset of a RockYou user and imagine why they selected a password. Is justin the name of the user? Their significant other? Their son? Or are they a Justin Bieber fan? Is princess a nickname for their spouse or daughter? The name of their cat? Their dog? (It shows up frequently on lists of popular pet names, and a recent survey found that the most common way of selecting a password is using the name of a pet.) Is sexygirl self-referential? What about daddysgirl? dreamer? genius?

When I didn’t recognize a password, I Googled it. Most of these unknown passwords turned out to be ways to express love in different languages. For example, I learned that mahalkita means I love you in Tagalog. Love was a strong theme in any language; there seems to be something about creating a password that inspires people to declare their love.

Not surprisingly, the top 1000 passwords list includes a fair share of swear words, insults, and adult language. However, impolite passwords are much less prevalent than the more tender love-related words, appropriate for all audiences.

There are a couple dozen food-related words in the top 1000 passwords. The most popular is chocolate and most of the others are also sweets (and potentially nicknames for a significant other), but a few fruits and vegetables, and even chicken make their way to the top as well. Among fruits, banana appears in both singular and plural.

Animals are also popular. While felines appear on the password list in a number of forms and languages, monkey is by far the most popular animal, and the fourteenth most popular password. I can’t quite figure out why, and I don’t know whether or not this is related to the popularity of “banana.”

Fictional characters are also popular, especially cartoon characters. The twenty-fifth most popular password is tigger (which might also be on the list because it is a popular name for a cat). A number of super heroes and Disney princesses also make the list, as well as another cartoon cat, hellokitty. Real life celebrities also make the list, including several actors and singers. While at first I thought booboo might refer to the reality TV star Honey Boo Boo, I realized that the date of the password breach predates the launch of that TV show.

A number of passwords relate to the names of sports, sports teams, or athletes. Soccer-related passwords are particularly popular. There are several cities on the list that I’m guessing were selected as passwords because of their sports teams, especially soccer teams.

Besides the obvious lazy password password, and also PASSWORD, password1, and password2, some more clever (but nonetheless unoriginal) variations included secret and letmein. And I love that the 84th most popular password is whatever.

Some passwords puzzled me. Why would anyone select “lipgloss” as their password? Why not “lipstick” or “mascara”? Perhaps it refers to a 2007 song by Lil Mama? Why “moomoo”? Why “freedom”?

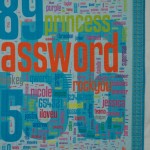

Even more popular than the word password were the numbers 123456, 12345, and 123456789. Other numbers and keyboard patterns also appear frequently. When I laid out the 1000 passwords on the quilt, I scaled them all according to their popularity. The most popular number sequence was chosen by more than three times as many people as the next most common password and was so large that I decided to place it in the background behind the other passwords so that it wouldn’t overwhelm the composition.

I made a few mistakes when designing the quilt that I didn’t notice until I was quilting it (quilting this quilt provided an opportunity to reflect on all the passwords yet again as I stitched past them). One problem was that when I transferred the top 1000 password list to Microsoft Excel while categorizing the passwords, the spreadsheet program removed all the zeros at the beginning of passwords. As a result, three passwords that are actually strings of zeros (5, 6, and 8 zeros) are printed simply as 0. In addition, three number strings that start with a 0 followed by other digits are printed without the leading 0. Another problem was that the color I selected for jesus, christian, angel, and a number of other religious words blended in with the background numbers when printed on fabric, making those words almost invisible (even though they showed up fine on my computer screen). I had carefully checked most of the colors I used against a Spoonflower color guide printed on fabric, but had inadvertently forgotten to check this particular color. I reprinted about half a dozen of these words in a darker color and sewed them onto the quilt like patches that one might add to repair a well-worn spot.
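The leading-zero problem comes from a tool guessing that an all-digit password is a number. As a rough illustration (not something I did at the time), here is a sketch of loading a password list so that every password stays a string. The function name `load_password_counts`, the two-column `password,count` layout, and the counts in the sample are all assumptions made up for this example.

```python
import csv
import io

def load_password_counts(csv_text):
    """Parse 'password,count' rows, keeping each password as text."""
    rows = []
    for password, count in csv.reader(io.StringIO(csv_text)):
        # Only the count is converted to a number; the password is left
        # untouched, so leading zeros survive.
        rows.append((password, int(count)))
    return rows

# Hypothetical rows; the counts are invented for illustration.
data = "00000,120\n012345,95\nmonkey,300\n"
rows = load_password_counts(data)

# What numeric conversion does to the same passwords.
as_numbers = [str(int(p)) for p, _ in rows if p.isdigit()]
```

Here `rows` keeps `00000` and `012345` intact, while `as_numbers` shows them collapsing to `0` and `12345`, which is what ended up printed on the quilt.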

There are also some passwords that I colored according to one category but that, upon further reflection, I am convinced were more likely selected for a different reason and belong in a different category. We’ll never know for sure. I invite viewers to discover the common themes represented by my color-coded categories and to speculate themselves about what users were thinking when they created these passwords. Zoom in on the thumbnail images above to see all of the smaller passwords in detail.

The colors, size, and format of this quilt were designed to be reminiscent of a baby quilt, which I imagine might become a security blanket. Like the passwords included in this piece, a security blanket offers comfort, but ultimately no real security.
